- Superintelligence

- Posts

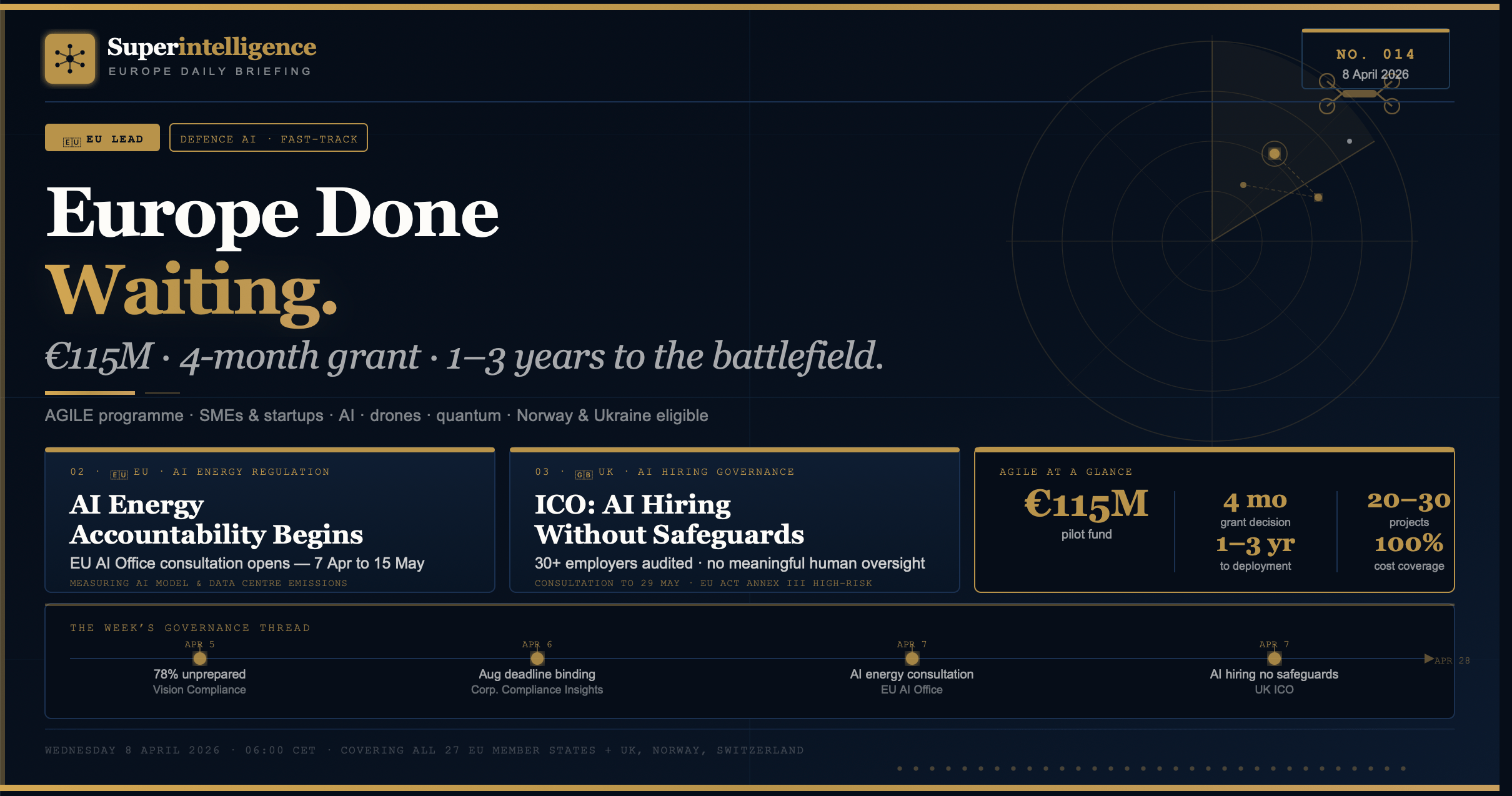

- Superintelligence Europe — No. 014

Superintelligence Europe — No. 014

The EU's AGILE programme puts €115M into defence AI, drones, and quantum — with a four-month grant clock. The EU AI Office opens its first consultation on AI energy consumption. The UK ICO finds most major employers running AI hiring without legal safeguards. And OpenAI's economic policy paper lands in European policy circles.

Everything that moved in European AI on Tuesday 7 April · EU · UK · Defence · Energy · Governance

Issue No. 014 · Wednesday, 8 April 2026

Tuesday’s European AI story had a distinctly different tone from the infrastructure announcements and funding rounds of the previous week. Euronews published the most detailed English-language analysis yet of the EU’s AGILE defence AI programme, a €115 million fast-track fund to move AI, autonomous systems, drones, and quantum from research labs to armed forces in one to three years, with funding decisions in four months rather than years. In Brussels, the European AI Office opened a targeted consultation on the same day on measuring the energy consumption of AI models and data centres.

In London, the ICO’s “Recruitment Rewired” report drew its widest media attention of the week: the regulator found that most major employers are running automated hiring decisions without adequate human oversight or legal safeguards, and it has launched a consultation on updated draft guidance. And in Washington, OpenAI published a sweeping economic policy paper with direct implications for how European governments frame the AI-and-labour question.

Defence AI, energy accountability, hiring rights, and the economics of intelligence. Your Wednesday morning briefing starts here.

Lead · EU-wide · Defence AI / Autonomous Systems

01 · The EU’s AGILE programme puts €115 million into defence AI, drones, and quantum, with a four-month grant clock and a one-to-three-year deployment target. Europe is done waiting.

Sources: Euronews · Defence Industry Europe · European Commission · 7 April 2026

On Tuesday, Euronews published its most detailed analysis yet of the Programme for Agile and Rapid Defence Innovation (AGILE), giving the European Commission’s March proposal its widest English-language circulation since the announcement. The programme commits €115 million as a pilot to fast-track disruptive defence technologies from the lab to operational use by armed forces. Its primary focus areas are AI systems for military decision-making and situational awareness, autonomous systems, drone technologies, quantum computing, and advanced robotics.

Unlike the EU’s existing defence innovation frameworks (the European Defence Fund, EUDIS, and EDIP), AGILE is explicitly designed around speed rather than process. Grants will cover up to 100 percent of eligible costs. Funding decisions are expected within four months of application, and technologies are expected to reach armed forces within one to three years of award. Between 20 and 30 projects are expected to receive individual grants of €1 million to €5 million. Companies from EU member states, as well as Norway and Ukraine, will be eligible to apply when the first call opens in early 2027.

The programme is aimed at start-ups, scale-ups, and SMEs that move at commercial technology speed rather than traditional defence procurement pace. The Commission described it as designed for “the ‘New Defence’ players, the startups and tech innovators who move at high speed”, a deliberate signal that AGILE is not intended for the large traditional defence primes that have historically dominated EU funding. The administrative burden has been reduced accordingly: single companies can apply without forming multinational consortia, and retroactive funding is permitted.

EU Defence Commissioner Andrius Kubilius framed the programme explicitly: Europe can no longer rely on defence innovation cycles measured in years when modern warfare depends on technologies that need to be developed, tested, and deployed in weeks or months. The war in Ukraine has made that reality visible. AGILE is the institutional response.

Regulation · EU-wide · AI Energy / Environmental Impact

02 · The European AI Office opens a targeted consultation on measuring AI energy consumption and emissions, the first formal EU regulatory step toward quantifying the environmental cost of AI

Source: European AI Office · Consultation open 7 April – 15 May 2026

The European AI Office opened a targeted consultation on Tuesday seeking input on how to measure and mitigate the energy footprint of AI models and data centre systems. The consultation window runs from April 7 to May 15, 2026. This is the first formal EU regulatory step toward establishing a measurement methodology for AI energy consumption. The question has been present in policy discussions since large language model training emerged, but it has not previously been the subject of a targeted Commission consultation.

The timing connects directly to Monday’s Futura-Sciences analysis of France’s ADEME data: 352 data centres consuming 2.2 percent of France’s national electricity, with a trajectory to 500 by 2030. The ADEME report made visible the environmental cost of European AI infrastructure at the national level. The EU consultation is the regulatory mechanism for addressing that cost at the continental level. The two are not coordinated (ADEME is a French national agency, the EU AI Office is a Commission body), but they arrived on consecutive days and point at the same underlying tension: European AI’s sovereignty goals and its environmental commitments are increasingly in conflict, and someone needs to quantify the trade-off before it can be managed.

What the Consultation Is Asking

The targeted consultation seeks input on: how energy consumption of AI models and systems should be measured; which metrics and methodologies are technically feasible; how emissions associated with AI training and inference should be reported; and how mitigation requirements should be structured within the AI Act’s existing framework.

The consultation closes May 15, 2026, 79 days before the August 2, 2026 compliance deadline that the Omnibus proposes to extend. The results will feed into the Commission’s ongoing AI Act implementation work and potentially into the next revision cycle. Any organisation operating AI infrastructure in the EU, including data centre operators, cloud providers, and AI developers, has a direct interest in the outcome.

Governance · United Kingdom · AI in Hiring / Data Protection

03 · The UK ICO audited more than 30 major employers and found most are running AI hiring decisions without adequate human oversight or legal compliance. “Recruitment Rewired” demands action.

Sources: ICO official, Recruitment Rewired · Staffing Industry Analysts · Published late March / covered 7 April 2026

The UK Information Commissioner’s Office published “Recruitment Rewired”, the most comprehensive regulatory audit of AI in UK hiring to date, in late March. It drew its widest attention on Tuesday as Staffing Industry Analysts and UK tech media amplified the findings. The ICO engaged with more than 30 major employers between March 2025 and January 2026 to understand how automated decision-making is being used in recruitment. Its key finding: many employers are relying on solely automated decisions, meaning systems operating without meaningful human involvement, to make or significantly influence hiring outcomes. That places those decisions within the scope of UK GDPR’s provisions on solely automated decision-making, which require a range of safeguards that the ICO found are frequently absent.

The range of AI tools identified in use is wider than most public discussion has acknowledged. Beyond CV screening and ranking, employers are using AI-powered behavioural games and psychometric assessments, sentiment and emotional analysis of interview transcripts, personality prediction from tone and language patterns, and scoring of written responses to interview questions.

The ICO wrote to 16 organisations identified as likely to be running solely automated decisions affecting candidates, and all 16 have now committed to acting on the recommendations. Separately, the ICO launched a consultation on updated draft guidance on automated decision-making and profiling. It is open until 29 May 2026 and runs concurrently with the EU AI Office’s energy consultation.

04 · Signal: Verified Voices, Tuesday 7 April

Credible accounts and publications that shaped Tuesday’s European AI conversation. Filtered for genuine signal.

The Week’s Governance Thread

Looking across the week: Vision Compliance (78% of firms unprepared, April 5) → Corporate Compliance Insights (August 2026 deadline is binding, April 6) → EU AI energy consultation opens (April 7) → ICO finds AI hiring running without safeguards (April 7). The thread connecting all four stories is the same: European AI is deploying faster than the accountability infrastructure designed to govern it. Each story is a different expression of one underlying gap, between what AI systems are doing in practice and what the regulatory frameworks assume they are doing in theory. The April 28 Omnibus trilogue, now three weeks away, is the moment when at least the compliance timeline question gets answered. The operational readiness question will take longer.
