Superintelligence Europe — No. 006

Munich Re puts a number on AI-powered cybercrime. Version 1 opens Dublin's new AI studio. 27 EU states, no easy enforcement fix. Your Thursday briefing.

Everything that moved in European AI on Wednesday 25 March · Across all 27 EU member states


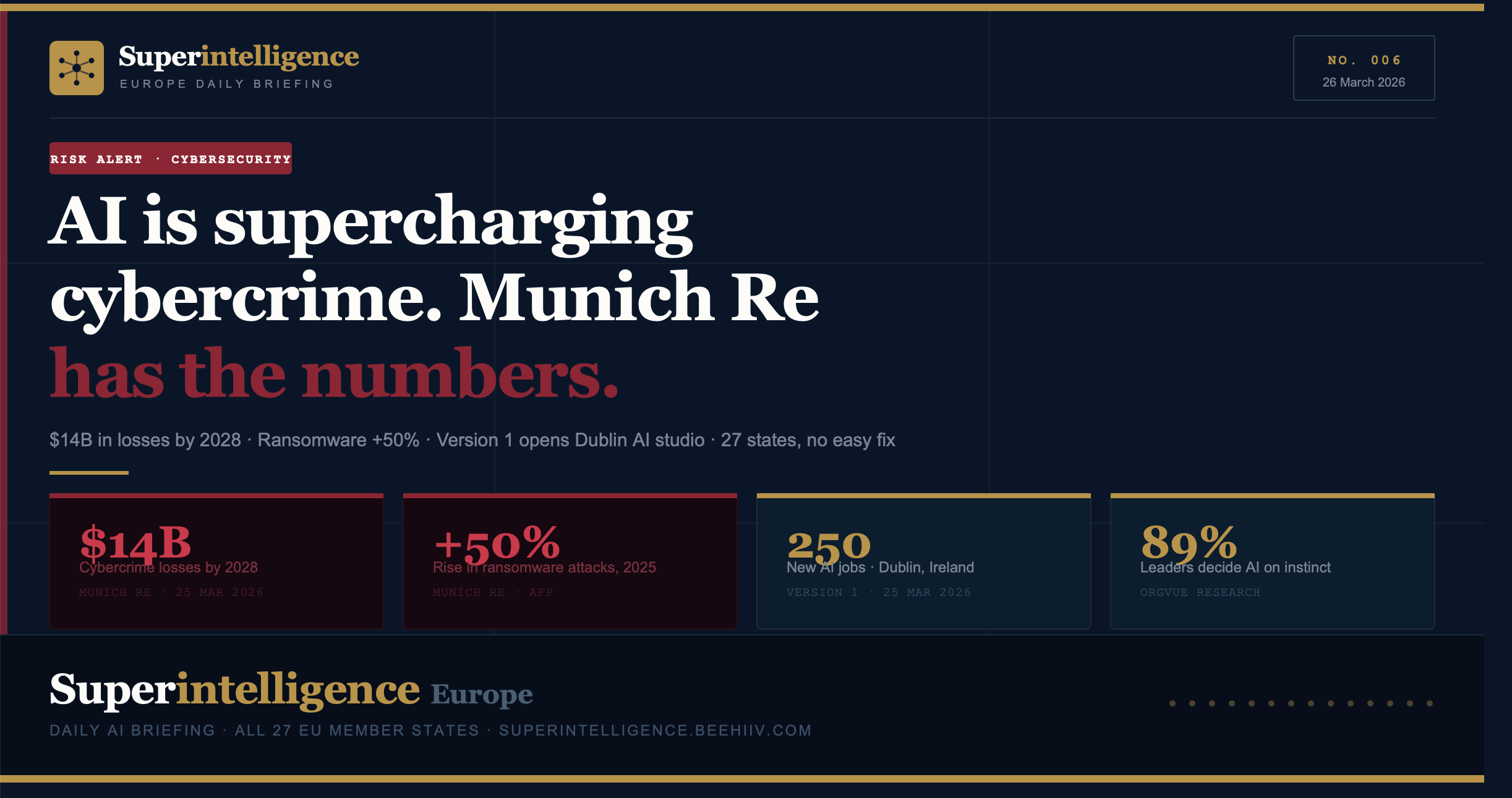

Issue No. 006 · Thursday, 26 March 2026

Wednesday was a day of warnings. Munich Re put the hardest numbers yet on what AI-powered cybercrime is costing European businesses, and what it will cost by 2028. Ransomware attacks rose nearly 50 percent in 2025 and show no sign of slowing. Agentic AI is now a tool for attackers, not just defenders. Separately, research published Wednesday found that 89 percent of senior leaders are making critical AI deployment decisions on instinct rather than data, a finding that connects directly to why so many European AI transformations are stalling.

Ireland gave the week its most concrete good news: Version 1 opened a new Dublin AI studio and announced 250 jobs, with two government ministers attending the launch. And from Brussels, a sobering MLex report confirmed what many have suspected: the 27 member states have no easy path to coordinated AI Act enforcement.

Risk, talent, governance, and a quiet innovation from Latvia. Six stories. Your Thursday morning briefing starts here.


Lead · Germany / EU-wide · Cybersecurity

01 Munich Re: AI is making cyberattacks more sophisticated, more targeted, and vastly more costly, and the numbers are accelerating

Sources: AFP / Munich Re report · 25 March 2026

Munich Re, the German reinsurer and one of the world’s most authoritative voices on quantified risk, released a major cybersecurity report on Wednesday projecting that cybercrime will generate global losses of approximately $14 billion by 2028. The report frames AI not as a future threat but as an active force already reshaping the economics of attack: automation now enables criminals to operate at greater scale and precision simultaneously, with highly personalised phishing campaigns, automatically generated malware, and synthetic identities that are increasingly indistinguishable from real ones.

The data on ransomware is particularly stark. The number of publicly reported attacks rose by nearly 50 percent in 2025 and shows no sign of abating in 2026. Coordinated attacks via networks of hijacked devices more than doubled in the same period, driven in part by the growing availability of attack-as-a-service tools. At the most sophisticated tier, criminal groups are collaborating with nation-state actors, concealing the origin of operations and accelerating their global reach. Martin Kreuzer, head of cyber risks at Munich Re, told AFP that “automation now plays a central role”, enabling attackers to be both more efficient and more targeted than previous generations of cybercriminals.

The Scale of the Problem

“If cybercrime were a country, it would be the third-largest economy in the world, behind only the United States and China.” (Munich Re report, released 25 March 2026)

The Agentic AI Risk No One Is Talking About

The Munich Re report introduces a dimension that most European AI policy discussions have ignored: agentic AI as an attack vector. Systems that can act autonomously, make decisions, and circumvent defensive mechanisms are being deployed by criminal actors faster than enterprise security teams are adapting. The EU AI Act classifies certain autonomous systems as high-risk, but its enforcement framework addresses deployment, not weaponisation. The gap between what the Act covers and what attackers are actually doing is widening.

Jobs & Investment · Ireland · AI Skills

02 Version 1 opens a state-of-the-art AI studio in Dublin and creates 250 jobs, with two government ministers at the launch

Irish technology company Version 1 opened its new Dublin headquarters and AI studio at Four Park Place on Wednesday, announcing 250 new jobs across the company’s operations. The launch was attended by Minister for Enterprise Peter Burke and Minister of State for AI and Digital Transformation Niamh Smyth, a level of political attendance that reflects how central AI talent retention has become to Irish industrial strategy.

The 250 Dublin roles come on top of 400 positions already announced for Northern Ireland, and form part of a broader £40 million UK investment plan. Version 1, which marks its 30th anniversary this year, now employs 3,700 people globally with revenues exceeding €400 million. The AI studio has been designed as a co-creation space for enterprise clients, but crucially will also be open to schools, universities, and community groups: a deliberate move to frame AI capability as a public good rather than a proprietary asset. CEO Roop Singh said the company’s core belief is that AI enhances human capability rather than replacing it.

Ireland’s AI Talent Play

Dublin is competing directly with London and Edinburgh for AI talent and enterprise AI capability. Version 1’s decision to plant its AI studio in Dublin, not a UK city, is a significant signal. The presence of two ministers at the opening reflects political awareness that AI job creation is now a visible and contested metric of national economic competitiveness within Europe.

Governance & Enterprise · EU-wide

03 EU AI Act enforcement: 27 member states, 27 different approaches, no easy fix

EU-wide · MLex · 25 Mar

A detailed MLex report published Wednesday documented what practitioners have been observing for months: EU member states are struggling to build coherent national enforcement structures for the AI Act, with fragmented regulatory systems, differing institutional designs, and capacity constraints all preventing the kind of coordinated cross-border supervision the Act requires.

The AI Act’s high-risk obligations apply from August 2026, five months away. A third of member states had not even designated their national competent authorities by the August 2025 deadline. No single governance model has emerged across the 27 states. Communications regulators anchor some; data protection authorities lead others. The fragmentation is structural, not incidental.

04 89% of leaders decide AI strategy on instinct. The cost is measurable.

EU-wide / Global · Orgvue / PR Newswire · 25 Mar

New research from workforce analytics firm Orgvue found that 89 percent of senior leaders rely on instinct rather than data when making key decisions about AI deployment. The finding directly explains a pattern visible across European enterprise: organisations that announce AI transformations and then fail to deliver them are not typically failing on technology. They are failing on decision architecture.

The Orgvue research connects directly to the AWS adoption report covered in Issue 005: Europe has 54 percent AI adoption but only 22 percent advanced use. The gap is not about tool availability. It is about how leaders are making deployment decisions. When nine in ten executives are guessing, it is unsurprising that most AI projects plateau at basic use.

Innovation · Latvia / Finland · Legal AI & Ecosystem

05 From Riga to Helsinki: smaller EU states show what practical AI deployment looks like

Sources: Labs of Latvia · DAIN Studios · 25 March 2026

Two smaller EU member states produced concrete AI deployment stories on Wednesday that rarely surface in continent-wide coverage.

In Latvia, a new AI-powered platform called ECLI.ai was announced, designed to analyse court rulings and legal information at scale. The platform addresses a specific and growing problem: the volume of published European Court of Justice rulings has outpaced any realistic capacity for manual review, making AI-assisted legal analysis increasingly a practical necessity rather than a convenience. ECLI.ai uses the European Case Law Identifier system as its structural backbone, positioning it as a potentially exportable tool across EU legal jurisdictions.

In Finland, DAIN Studios was selected as one of AI Finland’s 12 strategic partners for 2026, and will facilitate the focus group on AI agents and workflow redesign at the AI Finland Spring Forum in Helsinki, held on the same day as the announcement. The partnership signals Finland’s continued investment in structured, peer-learning approaches to enterprise AI adoption, a model that contrasts with the instinct-driven decision-making documented in Story 04.

06 · Signal: Verified Voices

Credible accounts and publications that drove Wednesday’s European AI conversation. Filtered for genuine signal.

@MunichRe · Global reinsurer · Germany

The Wednesday cybercrime report was the most-cited European AI risk document of the week. Munich Re’s authority as a risk quantifier, rather than a technology commentator, gave the figures unusual credibility in enterprise security, insurance, and policy circles simultaneously. The “third-largest economy” framing was the most-shared line.

@RTEbusiness · RTÉ Business · Ireland

First to break the Version 1 jobs story on X at 6:58am, picked up by the Irish Times, Silicon Republic, and Business Post within hours. RTÉ Business remains the fastest Irish business newswire for AI employment stories, which matters in a market where government AI policy is closely tied to job creation optics.

@LucaBertuzzi · MLex · EU Tech Policy

The byline on the AI Act governance coordination piece. Bertuzzi is the most consistently accurate reporter on EU AI Act implementation mechanics: his sourcing from national regulators and Commission officials gives his pieces a specificity that most Brussels tech coverage lacks. When he reports that there is no easy fix, it is not commentary. It is observation.
