AI's voice & vision

Hey friends! Welcome to today’s roundup from the AI world. Today's top AI news covers OpenAI’s developer-focused tools, Copilot’s voice and vision features, and Alibaba’s ACE visual creator. Also meet Archon, a new inference framework from Stanford University designed to enhance large language models. Let’s dive in and enjoy this AI ride in just 4 minutes!

The AI World Today

OpenAI Unveils Developer-Focused AI Tools

Microsoft’s Copilot Gains Voice and Vision

Alibaba Launches ACE Visual Creator

Pinterest Introduces AI Ad Tools

Heads Up

AI Solution

OpenAI Introduces Cost-Saving Tools for Developers

Andrew Hsu, OpenAI CTO. Screenshot: OpenAI/X

OpenAI's DevDay 2024 focused on empowering developers with incremental improvements rather than major product launches. Key innovations unveiled include Prompt Caching, which cuts costs for reused inputs by 50%, Vision Fine-Tuning for GPT-4o to enhance visual AI capabilities, a Realtime API enabling low-latency speech interactions, and Model Distillation to create efficient models based on advanced outputs. These tools aim to reduce costs, improve performance, and expand AI accessibility. OpenAI's shift towards ecosystem development marks a strategic move to foster innovation while addressing industry concerns around cost, resource efficiency, and scalability. This event highlights OpenAI’s focus on sustainability and long-term growth in the competitive AI landscape.
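To make the Prompt Caching figure concrete, here is a minimal sketch of the arithmetic, assuming the 50% discount applies only to the portion of the input that is reused. The function name `input_cost` and the price used in the example are hypothetical placeholders, not OpenAI's actual API or pricing.

```python
def input_cost(total_tokens: int, cached_tokens: int,
               price_per_million: float, cached_discount: float = 0.5) -> float:
    """Estimate the input cost of one request when part of the prompt is cached.

    price_per_million is a hypothetical price per one million input tokens.
    cached_discount is the fraction taken off cached tokens (0.5 = 50% off,
    the figure quoted in the article).
    """
    if not 0 <= cached_tokens <= total_tokens:
        raise ValueError("cached_tokens must be between 0 and total_tokens")
    uncached_tokens = total_tokens - cached_tokens
    full_price_part = uncached_tokens * price_per_million
    discounted_part = cached_tokens * price_per_million * (1 - cached_discount)
    return (full_price_part + discounted_part) / 1_000_000


if __name__ == "__main__":
    # Hypothetical example: a 10,000-token prompt, 8,000 tokens of which repeat.
    without_cache = input_cost(10_000, 0, price_per_million=2.0)
    with_cache = input_cost(10_000, 8_000, price_per_million=2.0)
    print(f"no cache: ${without_cache:.4f}, with cache: ${with_cache:.4f}")
```

Under this assumption the overall saving depends on how much of each prompt is repeated: a request whose input is fully cached costs half as much, while one with no repeated input costs the same as before.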

AI-Powered Copilot Introduces New Interactive Features

Screenshot: Pavandavuluri/X

Microsoft has overhauled its Copilot AI assistant, adding voice and vision capabilities to make it more personalized. The updated Copilot offers a virtual news presenter, lets users interact with voice commands, and introduces Copilot Vision, allowing the AI to interpret what users see on webpages. New features like Copilot Daily provide audio news summaries, while "Think Deeper" delivers detailed, step-by-step answers. The redesign also emphasizes a more card-based, customizable interface. Available on mobile, web, and Windows, these features initially roll out in English-speaking countries, with plans to expand globally. Microsoft aims to create a dynamic AI companion that evolves with users, supporting tasks from shopping to complex decision-making.

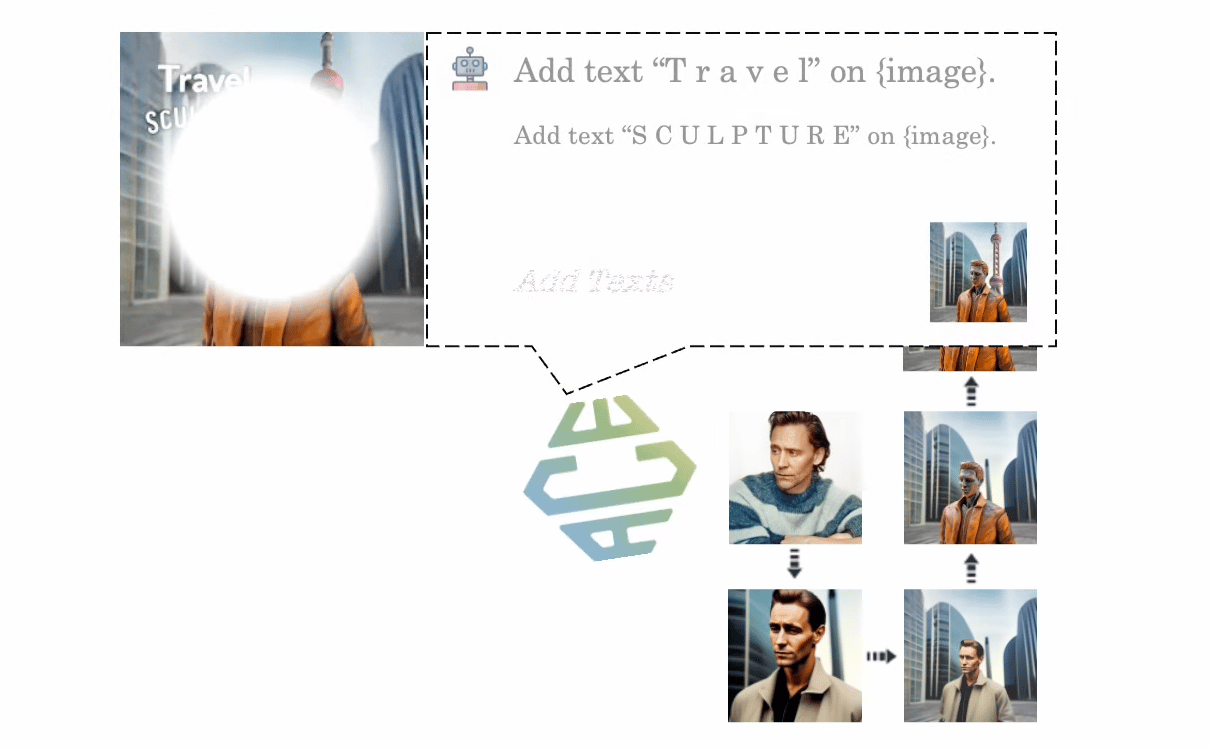

ACE: A Unified Model for Visual Creation and Editing

Screenshot: GitHub

Alibaba has unveiled ACE, an advanced "All-round Creator and Editor" AI model designed for visual generation and editing tasks. Unlike existing diffusion models, which focus primarily on text-guided visual generation, ACE supports multi-modal conditions, enabling it to perform a wide range of visual editing tasks. It introduces the Long-context Condition Unit (LCU), a unified input format, and uses a Transformer-based diffusion approach for joint training across multiple tasks. ACE also features an innovative data collection method that pairs images with accurate textual instructions using a fine-tuned multi-modal model. Benchmark results show ACE’s superior performance in visual generation, and its all-in-one capabilities enable a seamless multi-modal chat system for interactive image creation, eliminating complex pipelines.

Pinterest Rolls Out AI Tools for Advertisers

Source: Pinterest

Pinterest has introduced new generative AI tools for advertisers, allowing them to enhance product imagery by transforming blank or flat backgrounds into lifestyle scenes. Part of its Pinterest Performance+ suite, the AI tools aim to increase clickthrough rates and boost engagement. Early tests with Walgreens saw a 55% higher clickthrough rate and 13% lower cost-per-click using AI-generated backgrounds. Additionally, automation features now require 50% less input for campaign creation, with advertisers reporting a 64% decrease in cost per action and a 30% increase in conversion rates. Pinterest is also rolling out new Promotions tools, offering personalized discounts in several countries, and updating its bidding system to optimize for value instead of just clicks.

Heads Up

Pika has launched Pika 1.5, featuring new "Pikaffects" special effects that let the subjects of an image transform into surreal, physics-defying, highly malleable versions of themselves.

Google DeepMind's new Self-Correction via Reinforcement Learning (SCoRe) technique uses self-generated data to improve LLMs' self-correction abilities, boosting robustness, reliability, and reasoning.

Helm.ai launches VidGen-2, a next-gen generative AI model delivering 2X higher resolution, improved realism, and multi-camera support for autonomous driving development and validation.

Equinix raised $15 billion to expand its xScale data centers for AI, focusing on U.S. investments to support growing demand for large-scale infrastructure and server capacity.

Oracle plans to invest over $6.5 billion in its first public cloud region in Malaysia, joining global tech giants boosting AI-driven infrastructure in Southeast Asia.

Cerebras Systems, an AI chipmaker challenging Nvidia, filed for an IPO to raise $600 million, planning to list on Nasdaq with Citigroup and Barclays as underwriters.

Resolve AI, a startup focused on automating software operations, raised $35 million in seed funding led by Greylock to develop AI-powered tools for software engineers.

Durk Kingma, OpenAI co-founder, announced he's joining Anthropic, working remotely from the Netherlands, but hasn't disclosed his specific role or team within the company.

AI Solution

Stanford's Archon Boosts LLM Efficiency and Performance

Stanford University's Scaling Intelligence Lab has introduced Archon, a new inference framework designed to enhance large language models (LLMs) without additional training. Archon uses an inference-time architecture search (ITAS) algorithm to optimize LLM performance, making it both model-agnostic and open-source. Key components like the Generator, Fuser, Ranker, and Critic work together to refine potential responses faster and more efficiently. In tests, Archon outperformed top models like GPT-4o and Claude 3.5 by 15.1 percentage points. However, it works best with models of 70 billion parameters or more and is less effective for smaller models or single-query tasks. Despite limitations, Archon promises to reduce costs and improve LLMs' generalization capabilities in complex tasks.
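The way these components could fit together can be sketched with a toy pipeline. This is only an illustration under stated assumptions: the function names, the ordering (generate, critique, rank, fuse), and the word-count scoring are hypothetical stand-ins, not Archon's actual API or algorithm, and the "samplers" are plain functions rather than real LLM calls.

```python
from typing import Callable, List

Sampler = Callable[[str], str]


def generate(prompt: str, samplers: List[Sampler]) -> List[str]:
    """Generator step: collect one candidate answer from each sampler."""
    return [sample(prompt) for sample in samplers]


def critique(candidate: str) -> int:
    """Critic step (toy): score a candidate by its word count.

    A real critic would be a model judging quality; this is a placeholder.
    """
    return len(candidate.split())


def rank(candidates: List[str], top_k: int) -> List[str]:
    """Ranker step: keep the top_k candidates by critic score, best first."""
    return sorted(candidates, key=critique, reverse=True)[:top_k]


def fuse(candidates: List[str]) -> str:
    """Fuser step (toy): join the surviving candidates into one response."""
    return " | ".join(candidates)


def run_pipeline(prompt: str, samplers: List[Sampler], top_k: int = 2) -> str:
    """Chain the steps at inference time, with no additional training."""
    candidates = generate(prompt, samplers)
    best = rank(candidates, top_k)
    return fuse(best)


if __name__ == "__main__":
    # Hypothetical samplers standing in for different LLMs.
    toy_samplers = [
        lambda p: "yes",
        lambda p: "yes because of x",
        lambda p: "maybe so",
    ]
    print(run_pipeline("Is the sky blue?", toy_samplers, top_k=2))
```

An architecture search in this setting would try different combinations and orderings of such steps and keep the one that scores best on a target task. The sketch fixes a single ordering for simplicity.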